Tizen Native API 7.0

The NNStreamer function provides interfaces to create and execute stream pipelines with neural networks and sensors.

Required Header

#include <nnstreamer/nnstreamer.h>

Overview

The NNStreamer function provides interfaces to create and execute stream pipelines with neural networks and sensors.

This function allows the following operations with NNStreamer:

- Create a stream pipeline with NNStreamer plugins, GStreamer plugins, and sensor/camera/mic inputs.

- Push data to the pipeline from the application.

- Pull data from the pipeline to the application.

- Start, stop, and destroy the pipeline.

- Control switches and valves in the pipeline.

- Handle data from the pipeline with utility functions.

Note that this function set is supposed to be thread-safe.

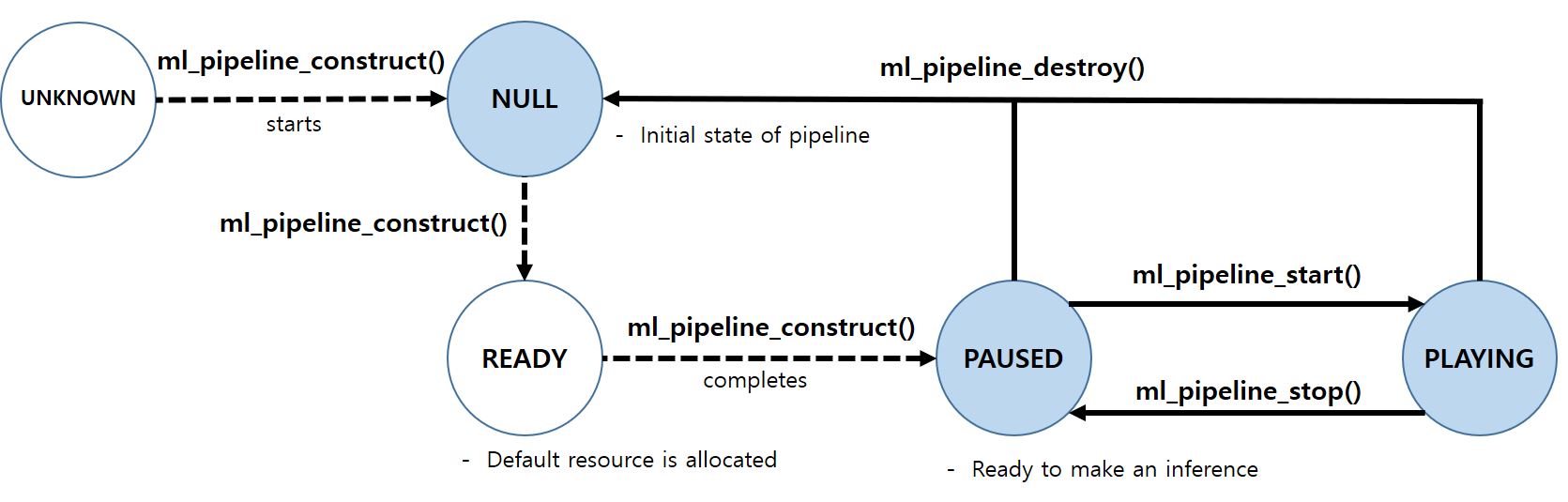

Pipeline State Diagram

Related Features

This function is related to the following features:

- http://tizen.org/feature/machine_learning

- http://tizen.org/feature/machine_learning.inference

It is recommended to probe features in your application for reliability.

You can check if a device supports the related features for this function by using System Information, thereby controlling the procedure of your application.

To ensure your application is only running on the device with specific features, please define the features in your manifest file using the manifest editor in the SDK.

For example, if your application accesses the camera device, you have to add 'http://tizen.org/privilege/camera' to the manifest of your application.

More details on featuring your application can be found in Feature Element.

Functions

| Return type | Function | Description |
|---|---|---|
| int | ml_pipeline_construct (const char *pipeline_description, ml_pipeline_state_cb cb, void *user_data, ml_pipeline_h *pipe) | Constructs the pipeline (GStreamer + NNStreamer). |
| int | ml_pipeline_destroy (ml_pipeline_h pipe) | Destroys the pipeline. |
| int | ml_pipeline_get_state (ml_pipeline_h pipe, ml_pipeline_state_e *state) | Gets the state of pipeline. |
| int | ml_pipeline_start (ml_pipeline_h pipe) | Starts the pipeline, asynchronously. |
| int | ml_pipeline_stop (ml_pipeline_h pipe) | Stops the pipeline, asynchronously. |
| int | ml_pipeline_flush (ml_pipeline_h pipe, bool start) | Clears all data and resets the pipeline. |
| int | ml_pipeline_sink_register (ml_pipeline_h pipe, const char *sink_name, ml_pipeline_sink_cb cb, void *user_data, ml_pipeline_sink_h *sink_handle) | Registers a callback for sink node of NNStreamer pipelines. |
| int | ml_pipeline_sink_unregister (ml_pipeline_sink_h sink_handle) | Unregisters a callback for sink node of NNStreamer pipelines. |
| int | ml_pipeline_src_get_handle (ml_pipeline_h pipe, const char *src_name, ml_pipeline_src_h *src_handle) | Gets a handle to operate as a src node of NNStreamer pipelines. |
| int | ml_pipeline_src_release_handle (ml_pipeline_src_h src_handle) | Releases the given src handle. |
| int | ml_pipeline_src_input_data (ml_pipeline_src_h src_handle, ml_tensors_data_h data, ml_pipeline_buf_policy_e policy) | Adds an input data frame. |
| int | ml_pipeline_src_set_event_cb (ml_pipeline_src_h src_handle, ml_pipeline_src_callbacks_s *cb, void *user_data) | Sets the callbacks which will be invoked when a new input frame may be accepted. |
| int | ml_pipeline_src_get_tensors_info (ml_pipeline_src_h src_handle, ml_tensors_info_h *info) | Gets a handle for the tensors information of given src node. |
| int | ml_pipeline_switch_get_handle (ml_pipeline_h pipe, const char *switch_name, ml_pipeline_switch_e *switch_type, ml_pipeline_switch_h *switch_handle) | Gets a handle to operate a "GstInputSelector"/"GstOutputSelector" node of NNStreamer pipelines. |
| int | ml_pipeline_switch_release_handle (ml_pipeline_switch_h switch_handle) | Releases the given switch handle. |
| int | ml_pipeline_switch_select (ml_pipeline_switch_h switch_handle, const char *pad_name) | Controls the switch with the given handle to select input/output nodes (pads). |
| int | ml_pipeline_switch_get_pad_list (ml_pipeline_switch_h switch_handle, char ***list) | Gets the pad names of a switch. |
| int | ml_pipeline_valve_get_handle (ml_pipeline_h pipe, const char *valve_name, ml_pipeline_valve_h *valve_handle) | Gets a handle to operate a "GstValve" node of NNStreamer pipelines. |
| int | ml_pipeline_valve_release_handle (ml_pipeline_valve_h valve_handle) | Releases the given valve handle. |
| int | ml_pipeline_valve_set_open (ml_pipeline_valve_h valve_handle, bool open) | Controls the valve with the given handle. |
| int | ml_pipeline_element_get_handle (ml_pipeline_h pipe, const char *element_name, ml_pipeline_element_h *elem_h) | Gets an element handle in NNStreamer pipelines to control its properties. |
| int | ml_pipeline_element_release_handle (ml_pipeline_element_h elem_h) | Releases the given element handle. |
| int | ml_pipeline_element_set_property_bool (ml_pipeline_element_h elem_h, const char *property_name, const int32_t value) | Sets the boolean value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_set_property_string (ml_pipeline_element_h elem_h, const char *property_name, const char *value) | Sets the string value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_set_property_int32 (ml_pipeline_element_h elem_h, const char *property_name, const int32_t value) | Sets the integer value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_set_property_int64 (ml_pipeline_element_h elem_h, const char *property_name, const int64_t value) | Sets the integer 64bit value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_set_property_uint32 (ml_pipeline_element_h elem_h, const char *property_name, const uint32_t value) | Sets the unsigned integer value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_set_property_uint64 (ml_pipeline_element_h elem_h, const char *property_name, const uint64_t value) | Sets the unsigned integer 64bit value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_set_property_double (ml_pipeline_element_h elem_h, const char *property_name, const double value) | Sets the floating point value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_set_property_enum (ml_pipeline_element_h elem_h, const char *property_name, const uint32_t value) | Sets the enumeration value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_get_property_bool (ml_pipeline_element_h elem_h, const char *property_name, int32_t *value) | Gets the boolean value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_get_property_string (ml_pipeline_element_h elem_h, const char *property_name, char **value) | Gets the string value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_get_property_int32 (ml_pipeline_element_h elem_h, const char *property_name, int32_t *value) | Gets the integer value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_get_property_int64 (ml_pipeline_element_h elem_h, const char *property_name, int64_t *value) | Gets the integer 64bit value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_get_property_uint32 (ml_pipeline_element_h elem_h, const char *property_name, uint32_t *value) | Gets the unsigned integer value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_get_property_uint64 (ml_pipeline_element_h elem_h, const char *property_name, uint64_t *value) | Gets the unsigned integer 64bit value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_get_property_double (ml_pipeline_element_h elem_h, const char *property_name, double *value) | Gets the floating point value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_element_get_property_enum (ml_pipeline_element_h elem_h, const char *property_name, uint32_t *value) | Gets the enumeration value of element's property in NNStreamer pipelines. |
| int | ml_pipeline_tensor_if_custom_register (const char *name, ml_pipeline_if_custom_cb cb, void *user_data, ml_pipeline_if_h *if_custom) | Registers a tensor_if custom callback. |
| int | ml_pipeline_tensor_if_custom_unregister (ml_pipeline_if_h if_custom) | Unregisters the tensor_if custom callback. |
| int | ml_check_nnfw_availability (ml_nnfw_type_e nnfw, ml_nnfw_hw_e hw, bool *available) | Checks the availability of the given execution environments. |
| int | ml_check_nnfw_availability_full (ml_nnfw_type_e nnfw, ml_nnfw_hw_e hw, const char *custom_option, bool *available) | Checks the availability of the given execution environments with custom option. |
| int | ml_check_element_availability (const char *element_name, bool *available) | Checks if the element is registered and available on the pipeline. |
| int | ml_pipeline_custom_easy_filter_register (const char *name, const ml_tensors_info_h in, const ml_tensors_info_h out, ml_custom_easy_invoke_cb cb, void *user_data, ml_custom_easy_filter_h *custom) | Registers a custom filter. |
| int | ml_pipeline_custom_easy_filter_unregister (ml_custom_easy_filter_h custom) | Unregisters the custom filter. |

Typedefs

| Typedef | Description |
|---|---|
| typedef void * ml_pipeline_h | A handle of an NNStreamer pipeline. |
| typedef void * ml_pipeline_sink_h | A handle of a "sink node" of an NNStreamer pipeline. |
| typedef void * ml_pipeline_src_h | A handle of a "src node" of an NNStreamer pipeline. |
| typedef void * ml_pipeline_switch_h | A handle of a "switch" of an NNStreamer pipeline. |
| typedef void * ml_pipeline_valve_h | A handle of a "valve node" of an NNStreamer pipeline. |
| typedef void * ml_pipeline_element_h | A handle of a common element (i.e., all GstElement types except AppSrc, AppSink, TensorSink, Selector, and Valve) of an NNStreamer pipeline. |
| typedef void * ml_custom_easy_filter_h | A handle of a "custom-easy filter" of an NNStreamer pipeline. |
| typedef void * ml_pipeline_if_h | A handle of an "if node" of an NNStreamer pipeline. |
| typedef void(* ml_pipeline_sink_cb)(const ml_tensors_data_h data, const ml_tensors_info_h info, void *user_data) | Callback for sink element of NNStreamer pipelines (pipeline's output). |
| typedef void(* ml_pipeline_state_cb)(ml_pipeline_state_e state, void *user_data) | Callback for the change of pipeline state. |
| typedef int(* ml_pipeline_if_custom_cb)(const ml_tensors_data_h data, const ml_tensors_info_h info, int *result, void *user_data) | Callback for custom condition of tensor_if. |

Defines

| Define | Description |
|---|---|
| #define ML_TIZEN_CAM_VIDEO_SRC "tizencamvideosrc" | The virtual name to set the video source of camcorder in Tizen. |
| #define ML_TIZEN_CAM_AUDIO_SRC "tizencamaudiosrc" | The virtual name to set the audio source of camcorder in Tizen. |

Define Documentation

| #define ML_TIZEN_CAM_AUDIO_SRC "tizencamaudiosrc" |

The virtual name to set the audio source of camcorder in Tizen.

If an application needs to access the recorder device to construct the pipeline, set the virtual name as an audio source element. Note that you have to add 'http://tizen.org/privilege/recorder' to the manifest of your application.

- Since :

- 5.5

| #define ML_TIZEN_CAM_VIDEO_SRC "tizencamvideosrc" |

The virtual name to set the video source of camcorder in Tizen.

If an application needs to access the camera device to construct the pipeline, set the virtual name as a video source element. Note that you have to add 'http://tizen.org/privilege/camera' to the manifest of your application.

- Since :

- 5.5

Typedef Documentation

| typedef void* ml_custom_easy_filter_h |

A handle of a "custom-easy filter" of an NNStreamer pipeline.

- Since :

- 6.0

| typedef void* ml_pipeline_element_h |

A handle of a common element (i.e., all GstElement types except AppSrc, AppSink, TensorSink, Selector, and Valve) of an NNStreamer pipeline.

- Since :

- 6.0

| typedef void* ml_pipeline_h |

A handle of an NNStreamer pipeline.

- Since :

- 5.5

| typedef int(* ml_pipeline_if_custom_cb)(const ml_tensors_data_h data, const ml_tensors_info_h info, int *result, void *user_data) |

Callback for custom condition of tensor_if.

- Since :

- 6.5

- Remarks:

- The data can be used only in the callback. To use outside, make a copy.

- The info can be used only in the callback. To use outside, make a copy.

- The result can be used only in the callback and should not be released.

- Parameters:

- [in] data: The handle of the tensor output of the pipeline (a single frame. tensor/tensors). Number of tensors is determined by ml_tensors_info_get_count() with the handle 'info'. Note that the maximum number of tensors is 16 (ML_TENSOR_SIZE_LIMIT).

- [in] info: The handle of tensors information (cardinality, dimension, and type of given tensor/tensors).

- [out] result: Result of the user-defined condition. 0 refers to FALSE and a non-zero value refers to TRUE. The application should set the result value for given data.

- [in,out] user_data: User application's private data.

- Returns:

0 on success. Otherwise a negative error value.
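The result contract described above (store 0 for FALSE or a non-zero value for TRUE, and return 0 on success or a negative value on failure) can be sketched with a standalone helper. This is only an illustration under stated assumptions: `first_byte_exceeds` and its threshold argument are hypothetical names that are not part of the NNStreamer API, and the sketch deliberately avoids the Tizen headers so it compiles anywhere. Inside a real ml_pipeline_if_custom_cb, the raw buffer would typically be obtained from the data handle first, for example with ml_tensors_data_get_tensor_data().

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper, not part of the NNStreamer API. It mirrors the
 * ml_pipeline_if_custom_cb contract: write 0 (FALSE) or non-zero (TRUE)
 * into *result, and return 0 on success or a negative value on failure. */
int
first_byte_exceeds (const uint8_t *raw, size_t size, uint8_t threshold,
    int *result)
{
  /* A missing out-pointer is a programming error in the caller. */
  assert (result != NULL);

  if (raw == NULL || size == 0)
    return -1;                  /* Stand-in for a negative ML_ERROR_* code. */

  *result = (raw[0] > threshold) ? 1 : 0;
  return 0;
}
```

A callback built on such a helper would forward the helper's return value unchanged, so that a failure to read the frame is reported as an error and not as a FALSE condition.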

| typedef void* ml_pipeline_if_h |

A handle of an "if node" of an NNStreamer pipeline.

- Since :

- 6.5

| typedef void(* ml_pipeline_sink_cb)(const ml_tensors_data_h data, const ml_tensors_info_h info, void *user_data) |

Callback for sink element of NNStreamer pipelines (pipeline's output).

If an application wants to accept data outputs of an NNStreamer stream, use this callback to get data from the stream. Note that the buffer may be deallocated after the return and this is synchronously called. Thus, if you need the data afterwards, copy the data to another buffer and return fast. Do not spend too much time in the callback. It is recommended to use very small tensors at sinks.

- Since :

- 5.5

- Remarks:

- The data can be used only in the callback. To use outside, make a copy.

- The info can be used only in the callback. To use outside, make a copy.

- Parameters:

- [in] data: The handle of the tensor output of the pipeline (a single frame. tensor/tensors). Number of tensors is determined by ml_tensors_info_get_count() with the handle 'info'. Note that the maximum number of tensors is 16 (ML_TENSOR_SIZE_LIMIT).

- [in] info: The handle of tensors information (cardinality, dimension, and type of given tensor/tensors).

- [in,out] user_data: User application's private data.
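Because the buffer passed to the sink callback may be deallocated after the callback returns, one common approach is to copy the frame into storage owned by the application and return immediately. The sketch below shows that copy step only, under stated assumptions: `frame_copy_s`, `frame_copy_store`, and `FRAME_CAPACITY` are hypothetical names that are not part of the NNStreamer API, and the Tizen headers are left out so the code compiles on its own. In a real ml_pipeline_sink_cb, `raw` and `size` would come from the data handle, for example through ml_tensors_data_get_tensor_data().

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#define FRAME_CAPACITY 64       /* Hypothetical bound for a small sink tensor. */

/* Hypothetical application-side storage, not part of the NNStreamer API. */
typedef struct
{
  unsigned char bytes[FRAME_CAPACITY];
  size_t size;                  /* Number of valid bytes in 'bytes'. */
} frame_copy_s;

/* Copies one frame into preallocated storage so that the sink callback can
 * return quickly. Returns 0 on success, or -1 if the input is missing or
 * larger than the storage; the previous copy is kept in that case. */
int
frame_copy_store (frame_copy_s *dst, const void *raw, size_t size)
{
  assert (dst != NULL);

  if (raw == NULL || size > FRAME_CAPACITY)
    return -1;

  memcpy (dst->bytes, raw, size);
  dst->size = size;
  return 0;
}
```

Preallocated storage keeps allocation out of the callback path. If another thread reads the copy, the application still has to protect it with its own synchronization, which is omitted here.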

| typedef void* ml_pipeline_sink_h |

A handle of a "sink node" of an NNStreamer pipeline.

- Since :

- 5.5

| typedef void* ml_pipeline_src_h |

A handle of a "src node" of an NNStreamer pipeline.

- Since :

- 5.5

| typedef void(* ml_pipeline_state_cb)(ml_pipeline_state_e state, void *user_data) |

Callback for the change of pipeline state.

If an application wants to get the change of pipeline state, use this callback. This callback can be registered when constructing the pipeline using ml_pipeline_construct(). Do not spend too much time in the callback.

- Since :

- 5.5

- Parameters:

- [in] state: The new state of the pipeline.

- [out] user_data: User application's private data.

| typedef void* ml_pipeline_switch_h |

A handle of a "switch" of an NNStreamer pipeline.

- Since :

- 5.5

| typedef void* ml_pipeline_valve_h |

A handle of a "valve node" of an NNStreamer pipeline.

- Since :

- 5.5

Enumeration Type Documentation

| enum ml_pipeline_buf_policy_e |

Enumeration for buffer deallocation policies.

- Since :

- 5.5

- Enumerator:

ML_PIPELINE_BUF_POLICY_AUTO_FREE Default. Application should not deallocate this buffer. NNStreamer will deallocate it when the buffer is no longer needed.

ML_PIPELINE_BUF_POLICY_DO_NOT_FREE This buffer is not to be freed by NNStreamer (i.e., it's a static object). However, be careful: NNStreamer might be accessing this object after the return of the API call.

ML_PIPELINE_BUF_POLICY_MAX Max size of ml_pipeline_buf_policy_e structure.

ML_PIPELINE_BUF_SRC_EVENT_EOS Trigger End-Of-Stream event for the corresponding appsrc and ignore the given input value. The corresponding appsrc will no longer accept new data after this.

| enum ml_pipeline_state_e |

Enumeration for pipeline state.

The pipeline state is described in the Pipeline State Diagram. For details, refer to https://gstreamer.freedesktop.org/documentation/plugin-development/basics/states.html.

- Since :

- 5.5

| enum ml_pipeline_switch_e |

Function Documentation

| int ml_check_element_availability | ( | const char * | element_name, |

| bool * | available | ||

| ) |

Checks if the element is registered and available on the pipeline.

If the function returns an error, available may not be changed.

- Since :

- 6.5

- Parameters:

- [in] element_name: The name of element.

- [out] available: true if it's available, false if it's not available.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful and the environments are available.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
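Since available may be left unchanged when the check returns an error, a caller can initialize the flag before the call and treat any non-zero return as "not available". The sketch below illustrates that pattern under stated assumptions: `element_check_fn`, `element_is_usable`, and `fake_check` are hypothetical names introduced here, and the checker is passed as a function pointer with the same shape as ml_check_element_availability() so the code compiles without the Tizen headers.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Same shape as ml_check_element_availability(); declared locally so the
 * sketch builds without the Tizen headers. */
typedef int (*element_check_fn) (const char *element_name, bool *available);

/* Hypothetical wrapper: reports true only when the check succeeds and sets
 * the flag. The flag is initialized first because 'available' may be left
 * unchanged when the check returns an error. */
bool
element_is_usable (element_check_fn check, const char *element_name)
{
  bool available = false;

  assert (check != NULL);

  if (check (element_name, &available) != 0)
    return false;

  return available;
}

/* Stand-in checker used only to exercise the wrapper off-device: it fails
 * for a NULL name without touching *available, and otherwise reports the
 * element as available. */
int
fake_check (const char *element_name, bool *available)
{
  if (element_name == NULL)
    return -1;

  *available = true;
  return 0;
}
```

On a device, the real function could be passed in place of the stand-in, for example `element_is_usable (ml_check_element_availability, "tensor_converter")`.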

| int ml_check_nnfw_availability | ( | ml_nnfw_type_e | nnfw, |

| ml_nnfw_hw_e | hw, | ||

| bool * | available | ||

| ) |

Checks the availability of the given execution environments.

If the function returns an error, available may not be changed.

- Since :

- 5.5

- Parameters:

- [in] nnfw: Check if the nnfw is available in the system. Set ML_NNFW_TYPE_ANY to skip checking nnfw.

- [in] hw: Check if the hardware is available in the system. Set ML_NNFW_HW_ANY to skip checking hardware.

- [out] available: true if it's available, false if it's not available.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful and the environments are available.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.

| int ml_check_nnfw_availability_full | ( | ml_nnfw_type_e | nnfw, |

| ml_nnfw_hw_e | hw, | ||

| const char * | custom_option, | ||

| bool * | available | ||

| ) |

Checks the availability of the given execution environments with custom option.

If the function returns an error, available may not be changed.

- Since :

- 6.5

- Parameters:

- [in] nnfw: Check if the nnfw is available in the system. Set ML_NNFW_TYPE_ANY to skip checking nnfw.

- [in] hw: Check if the hardware is available in the system. Set ML_NNFW_HW_ANY to skip checking hardware.

- [in] custom_option: Custom option string to check framework and hardware. If an nnstreamer filter plugin needs to handle detailed option for hardware detection, use this parameter.

- [out] available: true if it's available, false if it's not available.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful and the environments are available.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.

| int ml_pipeline_construct | ( | const char * | pipeline_description, |

| ml_pipeline_state_cb | cb, | ||

| void * | user_data, | ||

| ml_pipeline_h * | pipe | ||

| ) |

Constructs the pipeline (GStreamer + NNStreamer).

Use this function to create gst_parse_launch compatible NNStreamer pipelines.

- Since :

- 5.5

- Remarks:

- If the function succeeds, pipe handle must be released using ml_pipeline_destroy().

- http://tizen.org/privilege/mediastorage is needed if pipeline_description is relevant to media storage.

- http://tizen.org/privilege/externalstorage is needed if pipeline_description is relevant to external storage.

- http://tizen.org/privilege/camera is needed if pipeline_description accesses the camera device.

- http://tizen.org/privilege/recorder is needed if pipeline_description accesses the recorder device.

- Parameters:

- [in] pipeline_description: The pipeline description compatible with GStreamer gst_parse_launch(). Refer to the GStreamer manual or the NNStreamer (https://github.com/nnstreamer/nnstreamer) documentation for examples and the grammar.

- [in] cb: The function to be called when the pipeline state is changed. You may set NULL if it's not required.

- [in] user_data: Private data for the callback. This value is passed to the callback when it's invoked.

- [out] pipe: The NNStreamer pipeline handler from the given description.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_PERMISSION_DENIED: The application does not have the required privilege to access the media storage, external storage, microphone, or camera.

- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid. (Pipeline is not negotiated yet.)

- ML_ERROR_STREAMS_PIPE: Pipeline construction failed because of a wrong parameter or an initialization failure.

- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory to construct the pipeline.

- Precondition:

- The pipeline state should be ML_PIPELINE_STATE_UNKNOWN or ML_PIPELINE_STATE_NULL.

- Postcondition:

- The pipeline state will be ML_PIPELINE_STATE_PAUSED in the same thread.
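The pipeline_description argument of ml_pipeline_construct() is a plain string, so an application can assemble it at run time. The following is a minimal sketch of that step only, assuming a hypothetical helper named `build_description`; the caps string is borrowed from the custom-easy example in this document, and the element names are arbitrary. The helper does not call any NNStreamer function, so it compiles without the Tizen headers.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>

/* Hypothetical helper: writes a gst_parse_launch-style description with a
 * named appsrc and a named tensor_sink into 'buf'. Returns 0 on success, or
 * -1 if an argument is missing or the buffer is too small. */
int
build_description (char *buf, size_t len, const char *src_name,
    const char *sink_name)
{
  int n;

  assert (buf != NULL);

  if (src_name == NULL || sink_name == NULL || len == 0)
    return -1;

  n = snprintf (buf, len,
      "appsrc name=%s ! "
      "other/tensor,dimension=(string)2:1:1:1,type=(string)int8,"
      "framerate=(fraction)0/1 ! "
      "tensor_sink name=%s", src_name, sink_name);

  /* snprintf() reports the length it needed; treat truncation as failure. */
  return (n < 0 || (size_t) n >= len) ? -1 : 0;
}
```

The resulting string can be passed as pipeline_description, and the names given with `name=` are the ones later supplied as src_name to ml_pipeline_src_get_handle() and as sink_name to ml_pipeline_sink_register().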

| int ml_pipeline_custom_easy_filter_register | ( | const char * | name, |

| const ml_tensors_info_h | in, | ||

| const ml_tensors_info_h | out, | ||

| ml_custom_easy_invoke_cb | cb, | ||

| void * | user_data, | ||

| ml_custom_easy_filter_h * | custom | ||

| ) |

Registers a custom filter.

NNStreamer provides an interface for processing tensors with the 'custom-easy' framework, which can execute without an independent shared object. Using this function, the application can easily register and execute the processing code. If a custom filter with the same name already exists, this function fails and returns the error code ML_ERROR_INVALID_PARAMETER. Note that if ml_custom_easy_invoke_cb() returns a negative error value, the constructed pipeline no longer works properly, so developers should release the pipeline handle and recreate it.

- Since :

- 6.0

- Remarks:

- If the function succeeds, custom handle must be released using ml_pipeline_custom_easy_filter_unregister().

- Parameters:

- [in] name: The name of custom filter.

- [in] in: The handle of input tensors information.

- [in] out: The handle of output tensors information.

- [in] cb: The function to be called when the pipeline runs.

- [in] user_data: Private data for the callback. This value is passed to the callback when it's invoked.

- [out] custom: The custom filter handler.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: The parameter is invalid, or duplicated name exists.

- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory to register the custom filter.

Here is an example of the usage:

```c
// Define invoke callback.
static int custom_filter_invoke_cb (const ml_tensors_data_h in, ml_tensors_data_h out, void *user_data)
{
  // Get input tensors using data handle 'in', and fill output tensors using data handle 'out'.
  return 0;
}

// The pipeline description (input data with dimension 2:1:1:1 and type int8 will be passed to custom filter 'my-custom-filter', which converts data type to float32 and processes tensors.)
const char pipeline[] = "appsrc ! other/tensor,dimension=(string)2:1:1:1,type=(string)int8,framerate=(fraction)0/1 ! tensor_filter framework=custom-easy model=my-custom-filter ! tensor_sink";
int status;
ml_pipeline_h pipe = NULL;
ml_custom_easy_filter_h custom = NULL;
ml_tensors_info_h in_info, out_info;
ml_tensor_dimension dim = { 2, 1, 1, 1 };

// Set input and output tensors information.
ml_tensors_info_create (&in_info);
ml_tensors_info_set_count (in_info, 1);
ml_tensors_info_set_tensor_type (in_info, 0, ML_TENSOR_TYPE_INT8);
ml_tensors_info_set_tensor_dimension (in_info, 0, dim);

ml_tensors_info_create (&out_info);
ml_tensors_info_set_count (out_info, 1);
ml_tensors_info_set_tensor_type (out_info, 0, ML_TENSOR_TYPE_FLOAT32);
ml_tensors_info_set_tensor_dimension (out_info, 0, dim);

// Register custom filter with name 'my-custom-filter' ('custom-easy' framework).
status = ml_pipeline_custom_easy_filter_register ("my-custom-filter", in_info, out_info, custom_filter_invoke_cb, NULL, &custom);
if (status != ML_ERROR_NONE) {
  // Handle error case.
  goto error;
}

// Construct the pipeline.
status = ml_pipeline_construct (pipeline, NULL, NULL, &pipe);
if (status != ML_ERROR_NONE) {
  // Handle error case.
  goto error;
}

// Start the pipeline and execute the tensor.
ml_pipeline_start (pipe);

error:
// Destroy the pipeline and unregister custom filter (skip handles that were never created).
if (pipe) {
  ml_pipeline_stop (pipe);
  ml_pipeline_destroy (pipe);
}
if (custom)
  ml_pipeline_custom_easy_filter_unregister (custom);

// Release the tensors information handles.
ml_tensors_info_destroy (in_info);
ml_tensors_info_destroy (out_info);
```

| int ml_pipeline_custom_easy_filter_unregister | ( | ml_custom_easy_filter_h | custom | ) |

Unregisters the custom filter.

Use this function to release and unregister the custom filter.

- Since :

- 6.0

- Parameters:

- [in] custom: The custom filter to be unregistered.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: The parameter is invalid.

| int ml_pipeline_destroy | ( | ml_pipeline_h | pipe | ) |

Destroys the pipeline.

Use this function to destroy the pipeline constructed with ml_pipeline_construct().

- Since :

- 5.5

- Parameters:

- [in] pipe: The pipeline to be destroyed.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: The parameter is invalid. (Pipeline is not negotiated yet.)

- ML_ERROR_STREAMS_PIPE: Failed to access the pipeline state.

- Precondition:

- The pipeline state should be ML_PIPELINE_STATE_PLAYING or ML_PIPELINE_STATE_PAUSED.

- Postcondition:

- The pipeline state will be ML_PIPELINE_STATE_NULL.

| int ml_pipeline_element_get_handle | ( | ml_pipeline_h | pipe, |

| const char * | element_name, | ||

| ml_pipeline_element_h * | elem_h | ||

| ) |

Gets an element handle in NNStreamer pipelines to control its properties.

- Since :

- 6.0

- Remarks:

- If the function succeeds, elem_h handle must be released using ml_pipeline_element_release_handle().

- Parameters:

- [in] pipe: The pipeline to be managed.

- [in] element_name: The name of element to control.

- [out] elem_h: The element handle.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.

- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory.

Here is an example of the usage:

```c
ml_pipeline_h handle = NULL;
ml_pipeline_element_h demux_h = NULL;
gchar *pipeline;
gchar *ret_tensorpick = NULL;
int status;

pipeline = g_strdup ("videotestsrc ! video/x-raw,format=RGB,width=640,height=480 ! videorate max-rate=1 ! "
    "tensor_converter ! tensor_mux ! tensor_demux name=demux ! tensor_sink");

// Construct a pipeline
status = ml_pipeline_construct (pipeline, NULL, NULL, &handle);
if (status != ML_ERROR_NONE) {
  // handle error case
  goto error;
}

// Get the handle of target element
status = ml_pipeline_element_get_handle (handle, "demux", &demux_h);
if (status != ML_ERROR_NONE) {
  // handle error case
  goto error;
}

// Set the string value of given element's property
status = ml_pipeline_element_set_property_string (demux_h, "tensorpick", "1,2");
if (status != ML_ERROR_NONE) {
  // handle error case
  goto error;
}

// Get the string value of given element's property
status = ml_pipeline_element_get_property_string (demux_h, "tensorpick", &ret_tensorpick);
if (status != ML_ERROR_NONE) {
  // handle error case
  goto error;
}

// check the property value of given element
if (!g_str_equal (ret_tensorpick, "1,2")) {
  // handle error case
  goto error;
}

error:
// Release the resources; skip handles that were never obtained.
if (demux_h)
  ml_pipeline_element_release_handle (demux_h);
if (handle)
  ml_pipeline_destroy (handle);
g_free (ret_tensorpick);
g_free (pipeline);
```

| int ml_pipeline_element_get_property_bool | ( | ml_pipeline_element_h | elem_h, |

| const char * | property_name, | ||

| int32_t * | value | ||

| ) |

Gets the boolean value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.

- [in] property_name: The name of the property.

- [out] value: The boolean value of given property.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the third parameter is NULL.

| int ml_pipeline_element_get_property_double | ( | ml_pipeline_element_h | elem_h, |

| const char * | property_name, | ||

| double * | value | ||

| ) |

Gets the floating point value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Remarks:

- This function supports all types of floating point values such as Double and Float.

- Parameters:

- [in] elem_h: The target element handle.

- [in] property_name: The name of the property.

- [out] value: The floating point value of given property.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the third parameter is NULL.

| int ml_pipeline_element_get_property_enum | ( | ml_pipeline_element_h | elem_h, |

| const char * | property_name, | ||

| uint32_t * | value | ||

| ) |

Gets the enumeration value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Remarks:

- The enumeration value is returned as an unsigned integer value. Developers can look up the possible values using the gst-inspect tool.

- Parameters:

- [in] elem_h: The target element handle.

- [in] property_name: The name of the property.

- [out] value: The unsigned integer value of given property, which corresponds to the enumeration value.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the third parameter is NULL.

| int ml_pipeline_element_get_property_int32 | ( | ml_pipeline_element_h | elem_h, |

| const char * | property_name, | ||

| int32_t * | value | ||

| ) |

Gets the integer value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.

- [in] property_name: The name of the property.

- [out] value: The integer value of given property.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the third parameter is NULL.

| int ml_pipeline_element_get_property_int64 | ( | ml_pipeline_element_h | elem_h, |

| const char * | property_name, | ||

| int64_t * | value | ||

| ) |

Gets the integer 64bit value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.

- [in] property_name: The name of the property.

- [out] value: The integer 64bit value of given property.

- Returns:

0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.

- ML_ERROR_NOT_SUPPORTED: Not supported.

- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the third parameter is NULL.

| int ml_pipeline_element_get_property_string | ( | ml_pipeline_element_h | elem_h, |

| const char * | property_name, | ||

| char ** | value | ||

| ) |

Gets the string value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [out] value: The string value of given property. The caller is responsible for freeing the value using g_free().

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the third parameter is NULL.
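The ownership rule for the string getter can be sketched as follows. To keep the sketch buildable without the Tizen SDK, the getter, the error codes, and g_free() are replaced by stand-ins here (assumptions for illustration only, with placeholder numeric values), and copy_string_property() is a hypothetical helper, not part of the API.

```c
#include <stdio.h>
#include <stdlib.h>
#include <string.h>

/* --- Stand-ins (assumptions) so the sketch builds without the Tizen SDK. ---
 * In an application, include <nnstreamer/nnstreamer.h> and <glib.h> instead. */
#define ML_ERROR_NONE 0
#define ML_ERROR_INVALID_PARAMETER (-22) /* placeholder value */
#define ML_ERROR_OUT_OF_MEMORY (-12)     /* placeholder value */
#define g_free free
typedef void *ml_pipeline_element_h;

static int
ml_pipeline_element_get_property_string (ml_pipeline_element_h elem_h,
    const char *property_name, char **value)
{
  const char *fake = "RGB"; /* canned value returned by the stand-in */

  if (!elem_h || !property_name || !value)
    return ML_ERROR_INVALID_PARAMETER;
  *value = malloc (strlen (fake) + 1);
  if (!*value)
    return ML_ERROR_OUT_OF_MEMORY;
  strcpy (*value, fake);
  return ML_ERROR_NONE;
}
/* --- End of stand-ins. --- */

/* Hypothetical helper: copies a string property into a caller buffer and
 * frees the string handed out by the getter. */
static int
copy_string_property (ml_pipeline_element_h elem_h, const char *name,
    char *buf, size_t buf_len)
{
  char *value = NULL;
  int status;

  if (!buf || buf_len == 0)
    return ML_ERROR_INVALID_PARAMETER;

  status = ml_pipeline_element_get_property_string (elem_h, name, &value);
  if (status != ML_ERROR_NONE)
    return status; /* nothing was returned, so nothing to free */

  snprintf (buf, buf_len, "%s", value);
  g_free (value); /* the caller owns the returned string */
  return ML_ERROR_NONE;
}
```

Copying into a caller-owned buffer keeps the g_free() call next to the getter, so the returned string cannot leak on later error paths.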

int ml_pipeline_element_get_property_uint32 (ml_pipeline_element_h elem_h, const char *property_name, uint32_t *value)

Gets the unsigned integer value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [out] value: The unsigned integer value of given property.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the third parameter is NULL.

int ml_pipeline_element_get_property_uint64 (ml_pipeline_element_h elem_h, const char *property_name, uint64_t *value)

Gets the unsigned integer 64bit value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [out] value: The unsigned integer 64bit value of given property.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the third parameter is NULL.

int ml_pipeline_element_release_handle (ml_pipeline_element_h elem_h)

Releases the given element handle.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The handle to be released.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.

int ml_pipeline_element_set_property_bool (ml_pipeline_element_h elem_h, const char *property_name, const int32_t value)

Sets the boolean value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [in] value: The boolean value to be set.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the type is not boolean.

int ml_pipeline_element_set_property_double (ml_pipeline_element_h elem_h, const char *property_name, const double value)

Sets the floating point value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Remarks:

- This function supports all types of floating point values such as Double and Float.

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [in] value: The floating point value to be set.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the type is not a floating point number.

int ml_pipeline_element_set_property_enum (ml_pipeline_element_h elem_h, const char *property_name, const uint32_t value)

Sets the enumeration value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Remarks:

- Enumeration value is set as an unsigned integer value and developers can get this information using gst-inspect tool.

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [in] value: The unsigned integer value to be set, which corresponds to the enumeration value.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the type is not unsigned integer.
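Because enumeration properties travel as plain unsigned integers, an application may want to mirror the numbers reported by gst-inspect in its own enum. The following is a minimal sketch under that assumption: the property name "demo-mode", the demo_mode_e values, and set_demo_mode() are hypothetical, and the API functions and error codes are stand-ins with placeholder values so that the sketch builds without the Tizen SDK.

```c
#include <stddef.h>
#include <stdint.h>

/* --- Stand-ins (assumptions) so the sketch builds without the Tizen SDK. ---
 * In an application, include <nnstreamer/nnstreamer.h> instead. */
#define ML_ERROR_NONE 0
#define ML_ERROR_INVALID_PARAMETER (-22) /* placeholder value */
typedef void *ml_pipeline_element_h;
static uint32_t stub_enum_value; /* the stub element holds one enum property */

static int
ml_pipeline_element_set_property_enum (ml_pipeline_element_h elem_h,
    const char *property_name, const uint32_t value)
{
  if (!elem_h || !property_name)
    return ML_ERROR_INVALID_PARAMETER;
  stub_enum_value = value;
  return ML_ERROR_NONE;
}

static int
ml_pipeline_element_get_property_enum (ml_pipeline_element_h elem_h,
    const char *property_name, uint32_t *value)
{
  if (!elem_h || !property_name || !value)
    return ML_ERROR_INVALID_PARAMETER;
  *value = stub_enum_value;
  return ML_ERROR_NONE;
}
/* --- End of stand-ins. --- */

/* Hypothetical mirror of the numbers that gst-inspect lists for a property. */
typedef enum
{
  DEMO_MODE_FAST = 0,
  DEMO_MODE_ACCURATE = 1
} demo_mode_e;

/* Sets the enum property by its number and reads it back to confirm. */
static int
set_demo_mode (ml_pipeline_element_h elem_h, demo_mode_e mode)
{
  uint32_t readback = 0;
  int status;

  status = ml_pipeline_element_set_property_enum (elem_h, "demo-mode",
      (uint32_t) mode);
  if (status != ML_ERROR_NONE)
    return status;

  status = ml_pipeline_element_get_property_enum (elem_h, "demo-mode",
      &readback);
  if (status != ML_ERROR_NONE)
    return status;

  return (readback == (uint32_t) mode) ? ML_ERROR_NONE
      : ML_ERROR_INVALID_PARAMETER;
}
```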

int ml_pipeline_element_set_property_int32 (ml_pipeline_element_h elem_h, const char *property_name, const int32_t value)

Sets the integer value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [in] value: The integer value to be set.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the type is not integer.

int ml_pipeline_element_set_property_int64 (ml_pipeline_element_h elem_h, const char *property_name, const int64_t value)

Sets the integer 64bit value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [in] value: The integer 64bit value to be set.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the type is not integer.

int ml_pipeline_element_set_property_string (ml_pipeline_element_h elem_h, const char *property_name, const char *value)

Sets the string value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [in] value: The string value to be set.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the type is not string.

int ml_pipeline_element_set_property_uint32 (ml_pipeline_element_h elem_h, const char *property_name, const uint32_t value)

Sets the unsigned integer value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [in] value: The unsigned integer value to be set.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the type is not unsigned integer.

int ml_pipeline_element_set_property_uint64 (ml_pipeline_element_h elem_h, const char *property_name, const uint64_t value)

Sets the unsigned integer 64bit value of element's property in NNStreamer pipelines.

- Since :

- 6.0

- Parameters:

- [in] elem_h: The target element handle.
- [in] property_name: The name of the property.
- [in] value: The unsigned integer 64bit value to be set.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given property name does not exist or the type is not unsigned integer.

int ml_pipeline_flush (ml_pipeline_h pipe, bool start)

Clears all data and resets the pipeline.

During the flush operation, the pipeline is stopped and after the operation is done, the pipeline is resumed and ready to start the data flow.

- Since :

- 6.5

- Parameters:

- [in] pipe: The pipeline to be flushed.
- [in] start: true to start the pipeline after the flush operation is done.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
- ML_ERROR_STREAMS_PIPE: Failed to flush the pipeline.

int ml_pipeline_get_state (ml_pipeline_h pipe, ml_pipeline_state_e *state)

Gets the state of pipeline.

Gets the state of the pipeline handle returned by ml_pipeline_construct().

- Since :

- 5.5

- Parameters:

- [in] pipe: The pipeline handle.
- [out] state: The pipeline state.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid. (Pipeline is not negotiated yet.)
- ML_ERROR_STREAMS_PIPE: Failed to get state from the pipeline.

int ml_pipeline_sink_register (ml_pipeline_h pipe, const char *sink_name, ml_pipeline_sink_cb cb, void *user_data, ml_pipeline_sink_h *sink_handle)

Registers a callback for sink node of NNStreamer pipelines.

- Since :

- 5.5

- Remarks:

- If the function succeeds, sink_handle handle must be unregistered using ml_pipeline_sink_unregister().

- Parameters:

- [in] pipe: The pipeline to be attached with a sink node.
- [in] sink_name: The name of sink node, described with ml_pipeline_construct().
- [in] cb: The function to be called by the sink node.
- [in] user_data: Private data for the callback. This value is passed to the callback when it's invoked.
- [out] sink_handle: The sink handle.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid. (Not negotiated, sink_name is not found, or sink_name has an invalid type.)
- ML_ERROR_STREAMS_PIPE: Failed to connect a signal to sink element.
- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory.

- Precondition:

- The pipeline state should be ML_PIPELINE_STATE_PAUSED.
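How user_data reaches the sink callback can be sketched as follows. The callback type shown here is assumed to take the tensors data, the tensors info, and user_data; check it against the header of your SDK. The register/unregister functions, the handle types, and stub_emit_frame() are stand-ins (assumptions, placeholder error values) so that the sketch builds without the Tizen SDK; count_frames_cb() is a hypothetical callback.

```c
#include <stddef.h>

/* --- Stand-ins (assumptions) so the sketch builds without the Tizen SDK. ---
 * In an application, include <nnstreamer/nnstreamer.h> instead. */
#define ML_ERROR_NONE 0
#define ML_ERROR_INVALID_PARAMETER (-22) /* placeholder value */
typedef void *ml_pipeline_h;
typedef void *ml_pipeline_sink_h;
typedef void *ml_tensors_data_h;
typedef void *ml_tensors_info_h;
/* Assumed callback shape; verify against the SDK header. */
typedef void (*ml_pipeline_sink_cb) (const ml_tensors_data_h data,
    const ml_tensors_info_h info, void *user_data);

static ml_pipeline_sink_cb stub_cb;
static void *stub_user_data;

static int
ml_pipeline_sink_register (ml_pipeline_h pipe, const char *sink_name,
    ml_pipeline_sink_cb cb, void *user_data, ml_pipeline_sink_h *sink_handle)
{
  if (!pipe || !sink_name || !cb || !sink_handle)
    return ML_ERROR_INVALID_PARAMETER;
  stub_cb = cb;
  stub_user_data = user_data;
  *sink_handle = &stub_cb;
  return ML_ERROR_NONE;
}

static int
ml_pipeline_sink_unregister (ml_pipeline_sink_h sink_handle)
{
  if (!sink_handle)
    return ML_ERROR_INVALID_PARAMETER;
  stub_cb = NULL;
  stub_user_data = NULL;
  return ML_ERROR_NONE;
}

/* Simulates the pipeline delivering one frame to the registered sink. */
static void
stub_emit_frame (void)
{
  if (stub_cb)
    stub_cb (NULL, NULL, stub_user_data);
}
/* --- End of stand-ins. --- */

/* Hypothetical sink callback: counts frames through the user_data pointer. */
static void
count_frames_cb (const ml_tensors_data_h data, const ml_tensors_info_h info,
    void *user_data)
{
  unsigned int *count = user_data;

  (void) data; /* a real callback would read the tensors here */
  (void) info;
  if (count)
    (*count)++;
}
```

Passing a pointer to application state as user_data avoids global variables in the callback. Since this function set is described as thread-safe but the callback may run on a pipeline thread, a real counter would likely need its own synchronization.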

int ml_pipeline_sink_unregister (ml_pipeline_sink_h sink_handle)

Unregisters a callback for sink node of NNStreamer pipelines.

- Since :

- 5.5

- Parameters:

- [in] sink_handle: The sink handle to be unregistered.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.

- Precondition:

- The pipeline state should be ML_PIPELINE_STATE_PAUSED.

int ml_pipeline_src_get_handle (ml_pipeline_h pipe, const char *src_name, ml_pipeline_src_h *src_handle)

Gets a handle to operate as a src node of NNStreamer pipelines.

- Since :

- 5.5

- Remarks:

- If the function succeeds, src_handle handle must be released using ml_pipeline_src_release_handle().

- Parameters:

- [in] pipe: The pipeline to be attached with a src node.
- [in] src_name: The name of src node, described with ml_pipeline_construct().
- [out] src_handle: The src handle.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
- ML_ERROR_STREAMS_PIPE: Failed to get src element.
- ML_ERROR_TRY_AGAIN: The pipeline is not ready yet.
- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory.

int ml_pipeline_src_get_tensors_info (ml_pipeline_src_h src_handle, ml_tensors_info_h *info)

Gets a handle for the tensors information of given src node.

If the media type is not other/tensor or other/tensors, the info handle may not be correct. If you want to use other media types, you MUST set the correct properties.

- Since :

- 5.5

- Remarks:

- If the function succeeds, info handle must be released using ml_tensors_info_destroy().

- Parameters:

- [in] src_handle: The source handle returned by ml_pipeline_src_get_handle().
- [out] info: The handle of tensors information.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
- ML_ERROR_STREAMS_PIPE: The pipeline has inconsistent pad caps. (Pipeline is not negotiated yet.)
- ML_ERROR_TRY_AGAIN: The pipeline is not ready yet.

int ml_pipeline_src_input_data (ml_pipeline_src_h src_handle, ml_tensors_data_h data, ml_pipeline_buf_policy_e policy)

Adds an input data frame.

- Since :

- 5.5

- Parameters:

- [in] src_handle: The source handle returned by ml_pipeline_src_get_handle().
- [in] data: The handle of input tensors, in the format of tensors info given by ml_pipeline_src_get_tensors_info(). This function takes ownership of the data if policy is ML_PIPELINE_BUF_POLICY_AUTO_FREE.
- [in] policy: The policy of buffer deallocation. The policy value may include buffer deallocation mechanisms or event triggers for appsrc elements. If event triggers are provided, these functions will not give input data to the appsrc element, but will trigger the given event only.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
- ML_ERROR_STREAMS_PIPE: The pipeline has inconsistent pad caps. (Pipeline is not negotiated yet.)
- ML_ERROR_TRY_AGAIN: The pipeline is not ready yet.
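The ownership consequence of the buffer policy can be sketched as follows. Only ML_PIPELINE_BUF_POLICY_AUTO_FREE is named in this reference; ML_PIPELINE_BUF_POLICY_DO_NOT_FREE and ml_tensors_data_destroy() are assumed to exist with the meaning their names suggest. All API symbols below are stand-ins with placeholder values so that the sketch builds without the Tizen SDK, and push_and_give_away() and push_and_keep() are hypothetical helpers.

```c
#include <stddef.h>
#include <stdlib.h>

/* --- Stand-ins (assumptions) so the sketch builds without the Tizen SDK. ---
 * In an application, include <nnstreamer/nnstreamer.h> instead. */
#define ML_ERROR_NONE 0
#define ML_ERROR_INVALID_PARAMETER (-22) /* placeholder value */
typedef void *ml_pipeline_src_h;
typedef void *ml_tensors_data_h;
typedef enum
{
  ML_PIPELINE_BUF_POLICY_AUTO_FREE = 0,  /* placeholder value */
  ML_PIPELINE_BUF_POLICY_DO_NOT_FREE = 1 /* placeholder value */
} ml_pipeline_buf_policy_e;
static int stub_live_buffers; /* counts buffers not yet freed */

static ml_tensors_data_h
stub_data_create (void)
{
  void *p = malloc (8);
  if (p)
    stub_live_buffers++;
  return p;
}

static int
ml_tensors_data_destroy (ml_tensors_data_h data)
{
  if (!data)
    return ML_ERROR_INVALID_PARAMETER;
  free (data);
  stub_live_buffers--;
  return ML_ERROR_NONE;
}

static int
ml_pipeline_src_input_data (ml_pipeline_src_h src_handle,
    ml_tensors_data_h data, ml_pipeline_buf_policy_e policy)
{
  if (!src_handle || !data)
    return ML_ERROR_INVALID_PARAMETER;
  if (policy == ML_PIPELINE_BUF_POLICY_AUTO_FREE)
    ml_tensors_data_destroy (data); /* the stub frees the buffer at once */
  return ML_ERROR_NONE;
}
/* --- End of stand-ins. --- */

/* Hypothetical helper: hands the buffer over to the pipeline. On success the
 * caller's handle is cleared so it cannot be reused or freed twice. */
static int
push_and_give_away (ml_pipeline_src_h src, ml_tensors_data_h *data)
{
  int status;

  if (!data || !*data)
    return ML_ERROR_INVALID_PARAMETER;
  status = ml_pipeline_src_input_data (src, *data,
      ML_PIPELINE_BUF_POLICY_AUTO_FREE);
  if (status == ML_ERROR_NONE)
    *data = NULL; /* ownership moved to the pipeline */
  return status;
}

/* Hypothetical helper: pushes a frame while the caller keeps ownership and
 * remains responsible for destroying the buffer later. */
static int
push_and_keep (ml_pipeline_src_h src, ml_tensors_data_h data)
{
  return ml_pipeline_src_input_data (src, data,
      ML_PIPELINE_BUF_POLICY_DO_NOT_FREE);
}
```

This reference does not state who owns the buffer when a push with ML_PIPELINE_BUF_POLICY_AUTO_FREE fails, so the sketch leaves the caller's handle untouched in that case.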

int ml_pipeline_src_release_handle (ml_pipeline_src_h src_handle)

Releases the given src handle.

- Since :

- 5.5

- Parameters:

- [in] src_handle: The src handle to be released.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.

int ml_pipeline_src_set_event_cb (ml_pipeline_src_h src_handle, ml_pipeline_src_callbacks_s *cb, void *user_data)

Sets the callbacks which will be invoked when a new input frame may be accepted.

Note that only the last installed callbacks on appsrc are available in the pipeline. If the developer sets new callbacks, the old callbacks are replaced with the new ones.

- Since :

- 6.5

- Parameters:

- [in] src_handle: The source handle returned by ml_pipeline_src_get_handle().
- [in] cb: The app-src callbacks for event handling.
- [in] user_data: The user's custom data given to callbacks.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory.

int ml_pipeline_start (ml_pipeline_h pipe)

Starts the pipeline, asynchronously.

The pipeline handle returned by ml_pipeline_construct() is started. Note that this is an asynchronous function, so the state might still be "pending" when it returns. If you need to get the changed state, add a callback while constructing a pipeline with ml_pipeline_construct().

- Since :

- 5.5

- Parameters:

- [in] pipe: The pipeline handle.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid. (Pipeline is not negotiated yet.)
- ML_ERROR_STREAMS_PIPE: Failed to start the pipeline.

- Precondition:

- The pipeline state should be ML_PIPELINE_STATE_PAUSED.

- Postcondition:

- The pipeline state will be ML_PIPELINE_STATE_PLAYING.
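Because the start request is asynchronous, code that cannot use the state callback may poll ml_pipeline_get_state() a bounded number of times. The following is a minimal sketch of that idea, not the recommended method (the state callback passed to ml_pipeline_construct() is). The API functions, the state enum values, and the error codes are stand-ins with placeholder values so that the sketch builds without the Tizen SDK, and start_and_wait() is a hypothetical helper.

```c
#include <stddef.h>

/* --- Stand-ins (assumptions) so the sketch builds without the Tizen SDK. ---
 * In an application, include <nnstreamer/nnstreamer.h> instead. */
#define ML_ERROR_NONE 0
#define ML_ERROR_INVALID_PARAMETER (-22) /* placeholder value */
#define ML_ERROR_TRY_AGAIN (-11)         /* placeholder value */
typedef void *ml_pipeline_h;
typedef enum
{
  ML_PIPELINE_STATE_UNKNOWN = 0, /* placeholder values */
  ML_PIPELINE_STATE_PAUSED = 3,
  ML_PIPELINE_STATE_PLAYING = 4
} ml_pipeline_state_e;
static int stub_polls_until_playing = -1; /* -1: start was never requested */

static int
ml_pipeline_start (ml_pipeline_h pipe)
{
  if (!pipe)
    return ML_ERROR_INVALID_PARAMETER;
  stub_polls_until_playing = 2; /* pretend the change needs two polls */
  return ML_ERROR_NONE;
}

static int
ml_pipeline_get_state (ml_pipeline_h pipe, ml_pipeline_state_e *state)
{
  if (!pipe || !state)
    return ML_ERROR_INVALID_PARAMETER;
  if (stub_polls_until_playing > 0)
    stub_polls_until_playing--;
  *state = (stub_polls_until_playing == 0) ? ML_PIPELINE_STATE_PLAYING
      : ML_PIPELINE_STATE_PAUSED;
  return ML_ERROR_NONE;
}
/* --- End of stand-ins. --- */

/* Hypothetical helper: requests the start, then polls the state at most
 * max_polls times. Returns ML_ERROR_TRY_AGAIN if PLAYING was not observed. */
static int
start_and_wait (ml_pipeline_h pipe, int max_polls)
{
  ml_pipeline_state_e state = ML_PIPELINE_STATE_UNKNOWN;
  int status;
  int i;

  status = ml_pipeline_start (pipe);
  if (status != ML_ERROR_NONE)
    return status;

  for (i = 0; i < max_polls; i++) {
    status = ml_pipeline_get_state (pipe, &state);
    if (status != ML_ERROR_NONE)
      return status;
    if (state == ML_PIPELINE_STATE_PLAYING)
      return ML_ERROR_NONE;
    /* A real application would sleep briefly here between polls. */
  }
  return ML_ERROR_TRY_AGAIN;
}
```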

int ml_pipeline_stop (ml_pipeline_h pipe)

Stops the pipeline, asynchronously.

The pipeline handle returned by ml_pipeline_construct() is stopped. Note that this is an asynchronous function, so the state might still be "pending" when it returns. If you need to get the changed state, add a callback while constructing a pipeline with ml_pipeline_construct().

- Since :

- 5.5

- Parameters:

- [in] pipe: The pipeline to be stopped.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid. (Pipeline is not negotiated yet.)
- ML_ERROR_STREAMS_PIPE: Failed to stop the pipeline.

- Precondition:

- The pipeline state should be ML_PIPELINE_STATE_PLAYING.

- Postcondition:

- The pipeline state will be ML_PIPELINE_STATE_PAUSED.

int ml_pipeline_switch_get_handle (ml_pipeline_h pipe, const char *switch_name, ml_pipeline_switch_e *switch_type, ml_pipeline_switch_h *switch_handle)

Gets a handle to operate a "GstInputSelector"/"GstOutputSelector" node of NNStreamer pipelines.

Refer to https://gstreamer.freedesktop.org/data/doc/gstreamer/head/gstreamer-plugins/html/gstreamer-plugins-input-selector.html for input selectors. Refer to https://gstreamer.freedesktop.org/data/doc/gstreamer/head/gstreamer-plugins/html/gstreamer-plugins-output-selector.html for output selectors.

- Since :

- 5.5

- Remarks:

- If the function succeeds, switch_handle handle must be released using ml_pipeline_switch_release_handle().

- Parameters:

- [in] pipe: The pipeline to be managed.
- [in] switch_name: The name of switch (InputSelector/OutputSelector).
- [out] switch_type: The type of the switch. If NULL, it is ignored.
- [out] switch_handle: The switch handle.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory.

int ml_pipeline_switch_get_pad_list (ml_pipeline_switch_h switch_handle, char ***list)

Gets the pad names of a switch.

- Since :

- 5.5

- Remarks:

- If the function succeeds, list and its contents should be released using g_free(). Refer to the sample code below.

- Parameters:

- [in] switch_handle: The switch handle returned by ml_pipeline_switch_get_handle().
- [out] list: NULL terminated array of char*. The caller must free each string (char*) in the list and free the list itself.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
- ML_ERROR_STREAMS_PIPE: The element is neither an input nor an output switch (internal data inconsistency).
- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory.

Here is an example of the usage:

    int status;
    gchar *pipeline;
    ml_pipeline_h handle = NULL;
    ml_pipeline_switch_e switch_type;
    ml_pipeline_switch_h switch_handle = NULL;
    gchar **node_list = NULL;

    // pipeline description
    pipeline = g_strdup ("videotestsrc is-live=true ! videoconvert ! tensor_converter ! output-selector name=outs "
        "outs.src_0 ! tensor_sink name=sink0 async=false "
        "outs.src_1 ! tensor_sink name=sink1 async=false");

    status = ml_pipeline_construct (pipeline, NULL, NULL, &handle);
    if (status != ML_ERROR_NONE) {
      // handle error case
      goto error;
    }

    status = ml_pipeline_switch_get_handle (handle, "outs", &switch_type, &switch_handle);
    if (status != ML_ERROR_NONE) {
      // handle error case
      goto error;
    }

    status = ml_pipeline_switch_get_pad_list (switch_handle, &node_list);
    if (status != ML_ERROR_NONE) {
      // handle error case
      goto error;
    }

    if (node_list) {
      gchar *name = NULL;
      guint idx = 0;

      while ((name = node_list[idx++]) != NULL) {
        // node name is 'src_0' or 'src_1'

        // release name
        g_free (name);
      }
      // release list of switch pads
      g_free (node_list);
    }

    error:
    // handles are initialized to NULL so that cleanup is safe on early failure
    ml_pipeline_switch_release_handle (switch_handle);
    ml_pipeline_destroy (handle);
    g_free (pipeline);

int ml_pipeline_switch_release_handle (ml_pipeline_switch_h switch_handle)

Releases the given switch handle.

- Since :

- 5.5

- Parameters:

- [in] switch_handle: The handle to be released.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.

int ml_pipeline_switch_select (ml_pipeline_switch_h switch_handle, const char *pad_name)

Controls the switch with the given handle to select input/output nodes (pads).

- Since :

- 5.5

- Parameters:

- [in] switch_handle: The switch handle returned by ml_pipeline_switch_get_handle().
- [in] pad_name: The name of the chosen pad to be activated. Use ml_pipeline_switch_get_pad_list() to list the available pad names.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
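Selecting a pad only after confirming that the switch lists it can be sketched as follows. The two switch functions, the error codes, and g_free() are stand-ins (assumptions, placeholder values) so that the sketch builds without the Tizen SDK; the stub switch exposes the pads "src_0" and "src_1", and select_listed_pad() is a hypothetical helper.

```c
#include <stddef.h>
#include <stdlib.h>
#include <string.h>

/* --- Stand-ins (assumptions) so the sketch builds without the Tizen SDK. ---
 * In an application, include <nnstreamer/nnstreamer.h> and <glib.h> instead. */
#define ML_ERROR_NONE 0
#define ML_ERROR_INVALID_PARAMETER (-22) /* placeholder value */
#define ML_ERROR_OUT_OF_MEMORY (-12)     /* placeholder value */
#define g_free free
typedef void *ml_pipeline_switch_h;
static const char *stub_pads[] = { "src_0", "src_1" };
static const char *stub_selected = "src_0";

static int
ml_pipeline_switch_get_pad_list (ml_pipeline_switch_h switch_handle,
    char ***list)
{
  char **out;
  size_t i;

  if (!switch_handle || !list)
    return ML_ERROR_INVALID_PARAMETER;
  out = calloc (3, sizeof (char *)); /* two pads plus the NULL terminator */
  if (!out)
    return ML_ERROR_OUT_OF_MEMORY;
  for (i = 0; i < 2; i++) {
    out[i] = malloc (strlen (stub_pads[i]) + 1);
    if (!out[i])
      break; /* the list simply ends early in the stub */
    strcpy (out[i], stub_pads[i]);
  }
  *list = out;
  return ML_ERROR_NONE;
}

static int
ml_pipeline_switch_select (ml_pipeline_switch_h switch_handle,
    const char *pad_name)
{
  size_t i;

  if (!switch_handle || !pad_name)
    return ML_ERROR_INVALID_PARAMETER;
  for (i = 0; i < 2; i++) {
    if (strcmp (stub_pads[i], pad_name) == 0) {
      stub_selected = stub_pads[i];
      return ML_ERROR_NONE;
    }
  }
  return ML_ERROR_INVALID_PARAMETER;
}
/* --- End of stand-ins. --- */

/* Hypothetical helper: selects pad_name only if the switch lists it. */
static int
select_listed_pad (ml_pipeline_switch_h switch_handle, const char *pad_name)
{
  char **pads = NULL;
  int found = 0;
  int status;
  size_t i;

  if (!pad_name)
    return ML_ERROR_INVALID_PARAMETER;
  status = ml_pipeline_switch_get_pad_list (switch_handle, &pads);
  if (status != ML_ERROR_NONE)
    return status;

  for (i = 0; pads[i] != NULL; i++) {
    if (strcmp (pads[i], pad_name) == 0)
      found = 1;
    g_free (pads[i]); /* each name is owned by the caller */
  }
  g_free (pads); /* and so is the list itself */

  if (!found)
    return ML_ERROR_INVALID_PARAMETER;
  return ml_pipeline_switch_select (switch_handle, pad_name);
}
```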

int ml_pipeline_tensor_if_custom_register (const char *name, ml_pipeline_if_custom_cb cb, void *user_data, ml_pipeline_if_h *if_custom)

Registers a tensor_if custom callback.

If the if-condition is complex and cannot be expressed with tensor_if expressions, you can define a custom condition.

- Since :

- 6.5

- Remarks:

- If the function succeeds, if_custom handle must be released using ml_pipeline_tensor_if_custom_unregister().

- Parameters:

- [in] name: The name of custom condition.
- [in] cb: The function to be called when the pipeline runs.
- [in] user_data: Private data for the callback. This value is passed to the callback when it's invoked.
- [out] if_custom: The tensor_if handler.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: The parameter is invalid.
- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory to register the custom callback.
- ML_ERROR_STREAMS_PIPE: Failed to register the custom callback.

- Warning:

- A custom condition of the tensor_if is registered to the process globally. If the custom condition "X" is registered, this "X" may be referred to in any pipeline of the current process. So, be careful not to use the same condition name when using multiple pipelines.

Here is an example of the usage:

    // Define callback for tensor_if custom condition.
    static int tensor_if_custom_cb (const ml_tensors_data_h data, const ml_tensors_info_h info, int *result, void *user_data)
    {
      // Describe the conditions and pass the result.
      // Result 0 refers to FALSE and a non-zero value refers to TRUE.
      *result = 1;
      // Return 0 if there is no error.
      return 0;
    }

    // The pipeline description (input data with dimension 2:1:1:1 and type int8 will be passed to tensor_if custom condition.
    // Depending on the result, proceed to true or false paths.)
    const char pipeline[] = "appsrc ! other/tensor,dimension=(string)2:1:1:1,type=(string)int8,framerate=(fraction)0/1 ! "
        "tensor_if name=tif compared-value=CUSTOM compared-value-option=tif_custom_cb_name then=PASSTHROUGH else=PASSTHROUGH "
        "tif.src_0 ! tensor_sink name=true_condition async=false "
        "tif.src_1 ! tensor_sink name=false_condition async=false";
    int status;
    ml_pipeline_h pipe = NULL;
    ml_pipeline_if_h custom = NULL;

    // Register tensor_if custom with name 'tif_custom_cb_name'.
    status = ml_pipeline_tensor_if_custom_register ("tif_custom_cb_name", tensor_if_custom_cb, NULL, &custom);
    if (status != ML_ERROR_NONE) {
      // Handle error case.
      goto error;
    }

    // Construct the pipeline.
    status = ml_pipeline_construct (pipeline, NULL, NULL, &pipe);
    if (status != ML_ERROR_NONE) {
      // Handle error case.
      goto error;
    }

    // Start the pipeline and execute the tensor.
    ml_pipeline_start (pipe);

    error:
    // Destroy the pipeline and unregister tensor_if custom.
    ml_pipeline_stop (pipe);
    ml_pipeline_destroy (pipe);
    ml_pipeline_tensor_if_custom_unregister (custom);

int ml_pipeline_tensor_if_custom_unregister (ml_pipeline_if_h if_custom)

Unregisters the tensor_if custom callback.

Use this function to release and unregister the tensor_if custom callback.

- Since :

- 6.5

- Parameters:

- [in] if_custom: The tensor_if handle to be unregistered.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: The parameter is invalid.
- ML_ERROR_STREAMS_PIPE: Failed to unregister the custom callback.

int ml_pipeline_valve_get_handle (ml_pipeline_h pipe, const char *valve_name, ml_pipeline_valve_h *valve_handle)

Gets a handle to operate a "GstValve" node of NNStreamer pipelines.

Refer to https://gstreamer.freedesktop.org/data/doc/gstreamer/head/gstreamer-plugins/html/gstreamer-plugins-valve.html for more information.

- Since :

- 5.5

- Remarks:

- If the function succeeds, valve_handle handle must be released using ml_pipeline_valve_release_handle().

- Parameters:

- [in] pipe: The pipeline to be managed.
- [in] valve_name: The name of valve (Valve).
- [out] valve_handle: The valve handle.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
- ML_ERROR_OUT_OF_MEMORY: Failed to allocate required memory.

int ml_pipeline_valve_release_handle (ml_pipeline_valve_h valve_handle)

Releases the given valve handle.

- Since :

- 5.5

- Parameters:

- [in] valve_handle: The handle to be released.

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.

int ml_pipeline_valve_set_open (ml_pipeline_valve_h valve_handle, bool open)

Controls the valve with the given handle.

- Since :

- 5.5

- Parameters:

- [in] valve_handle: The valve handle returned by ml_pipeline_valve_get_handle().
- [in] open: true to open (let the flow pass), false to close (drop & stop the flow).

- Returns:

- 0 on success. Otherwise a negative error value.

- Return values:

- ML_ERROR_NONE: Successful.
- ML_ERROR_NOT_SUPPORTED: Not supported.
- ML_ERROR_INVALID_PARAMETER: Given parameter is invalid.
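The valve handle life cycle (get, use, release) can be sketched as follows. The three valve functions and the error codes are stand-ins (assumptions, placeholder values) so that the sketch builds without the Tizen SDK; the stub pipeline contains a single valve named "valve1", and set_valve_by_name() is a hypothetical helper.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* --- Stand-ins (assumptions) so the sketch builds without the Tizen SDK. ---
 * In an application, include <nnstreamer/nnstreamer.h> instead. */
#define ML_ERROR_NONE 0
#define ML_ERROR_INVALID_PARAMETER (-22) /* placeholder value */
typedef void *ml_pipeline_h;
typedef void *ml_pipeline_valve_h;
static bool stub_valve_open = true;
static int stub_live_handles; /* handles obtained but not yet released */

static int
ml_pipeline_valve_get_handle (ml_pipeline_h pipe, const char *valve_name,
    ml_pipeline_valve_h *valve_handle)
{
  if (!pipe || !valve_name || !valve_handle
      || strcmp (valve_name, "valve1") != 0)
    return ML_ERROR_INVALID_PARAMETER;
  stub_live_handles++;
  *valve_handle = &stub_valve_open;
  return ML_ERROR_NONE;
}

static int
ml_pipeline_valve_set_open (ml_pipeline_valve_h valve_handle, bool open)
{
  if (!valve_handle)
    return ML_ERROR_INVALID_PARAMETER;
  stub_valve_open = open;
  return ML_ERROR_NONE;
}

static int
ml_pipeline_valve_release_handle (ml_pipeline_valve_h valve_handle)
{
  if (!valve_handle)
    return ML_ERROR_INVALID_PARAMETER;
  stub_live_handles--;
  return ML_ERROR_NONE;
}
/* --- End of stand-ins. --- */

/* Hypothetical helper: opens or closes a named valve and always releases the
 * handle it obtained, whether or not the set operation succeeded. */
static int
set_valve_by_name (ml_pipeline_h pipe, const char *valve_name, bool open)
{
  ml_pipeline_valve_h valve = NULL;
  int status;
  int release_status;

  status = ml_pipeline_valve_get_handle (pipe, valve_name, &valve);
  if (status != ML_ERROR_NONE)
    return status; /* no handle was obtained, nothing to release */

  status = ml_pipeline_valve_set_open (valve, open);
  release_status = ml_pipeline_valve_release_handle (valve);

  /* Report the first failure, if any. */
  return (status != ML_ERROR_NONE) ? status : release_status;
}
```

An application that toggles a valve often may prefer to keep the handle for the lifetime of the pipeline instead of fetching it on every call.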